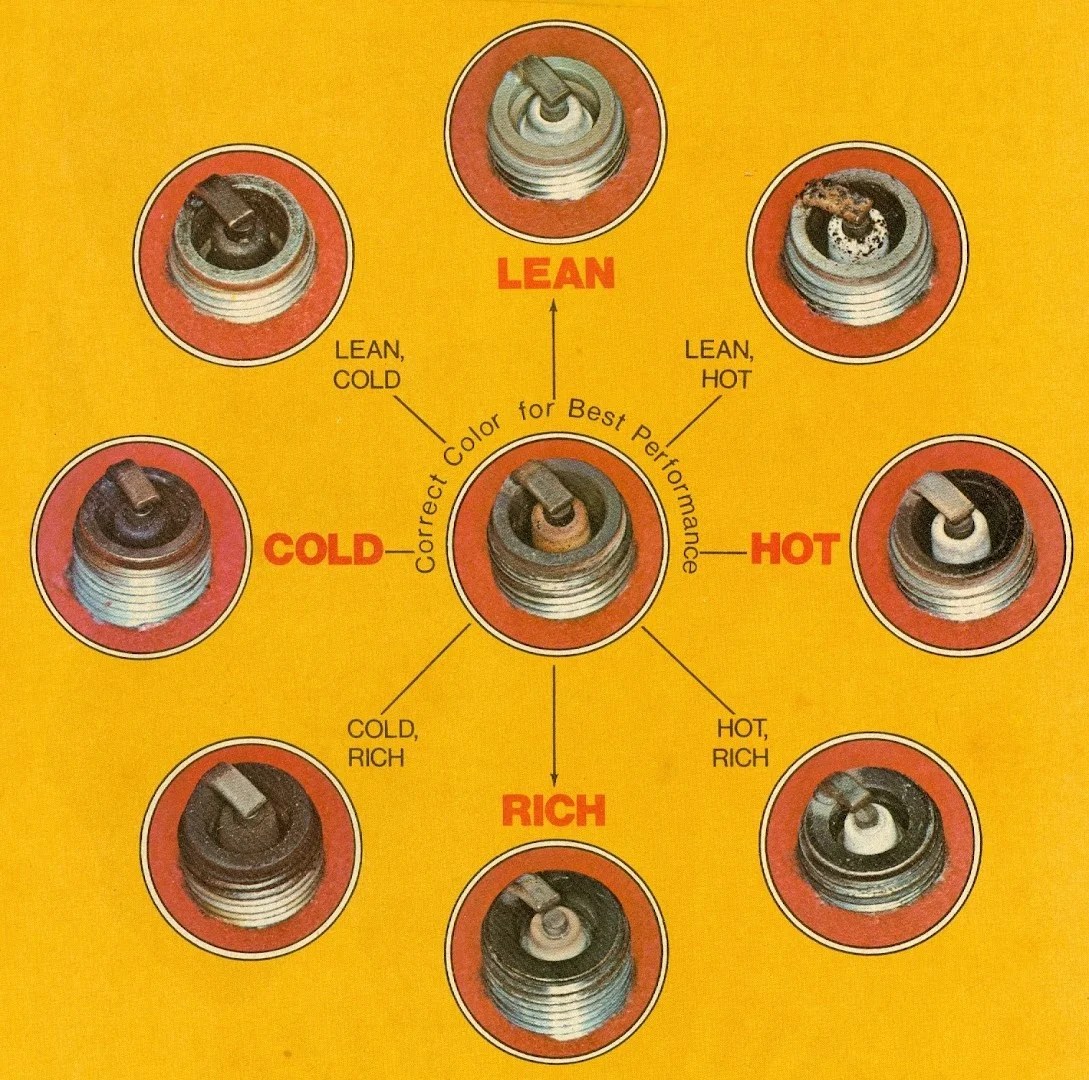

Spark Plug Burn Chart

Spark runs on both Windows and UNIX-like systems (e.g. Linux, Mac OS), and it should run on any platform that runs a supported version of Java. Spark also scales to thousands of nodes and multi-hour queries. If you’d like to build Spark from source, visit Building Spark. We’re proud to announce the release of Spark 0.7.0, a new major version of Spark that adds several key features, including a Python API for Spark and an alpha of Spark Streaming. Spark Docker images are available from Docker Hub under the accounts of both the Apache Software Foundation and Official Images. PySpark combines Python’s learnability and ease of use with the power of Apache Spark to enable processing and analysis of data at any size for everyone familiar with Python.
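
Because Spark depends on a supported Java runtime, a small pre-flight check can be useful before installing anything. The following is a minimal sketch, not part of Spark itself: the helper names `parse_java_major` and `installed_java_major` are hypothetical, and since the supported Java versions depend on the Spark release you download, no minimum version is hard-coded here.

```python
import re
import shutil
import subprocess


def parse_java_major(version_output):
    """Extract the major Java version from `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    major = int(match.group(1))
    # Legacy numbering: "1.8.0_292" means Java 8.
    if major == 1 and match.group(2) is not None:
        return int(match.group(2))
    return major


def installed_java_major():
    """Return the major version of the `java` found on PATH, or None."""
    java = shutil.which("java")
    if java is None:
        return None
    # `java -version` usually writes to stderr, so both streams are inspected.
    result = subprocess.run([java, "-version"], capture_output=True, text=True)
    return parse_java_major(result.stderr + result.stdout)
```

Calling `installed_java_major()` returns an integer such as the major version of the local JDK, or `None` when no `java` executable is found; compare the result against the requirements listed for your Spark release.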

In addition, this page lists other resources for learning Spark.

Spark Declarative Pipelines (SDP) is a declarative framework for building reliable, maintainable, and testable data pipelines on Spark. SDP simplifies ETL development by allowing you to focus on the …

To follow along with this guide, first download a packaged release of Spark from the Spark website. Since we won’t be using HDFS, you can download a package for any version of Hadoop.
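
After unpacking the downloaded release, it can help to confirm that the directory looks like a Spark distribution before going further. The sketch below is an illustration under stated assumptions: `missing_release_entries` and `EXPECTED_ENTRIES` are hypothetical names, and the list of expected entries reflects the usual layout of a binary release (launcher scripts under `bin/` and a `jars/` directory) rather than an official checklist.

```python
from pathlib import Path

# Entries assumed to exist in an unpacked binary Spark release.
EXPECTED_ENTRIES = ("bin/spark-submit", "bin/pyspark", "jars")


def missing_release_entries(spark_home):
    """Return the expected entries that are absent under spark_home."""
    root = Path(spark_home)
    return [entry for entry in EXPECTED_ENTRIES if not (root / entry).exists()]
```

An empty list from `missing_release_entries("/path/to/unpacked/spark")` suggests the package was extracted completely; any names in the list point to what is missing.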

Spark allows you to perform DataFrame operations with programmatic APIs, write SQL, perform streaming analyses, and do machine learning. Spark saves you from learning multiple frameworks.
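
To make the point about one framework serving several styles concrete, here is a minimal PySpark sketch that runs the same filter once through the DataFrame API and once through SQL. It assumes PySpark and a supported Java runtime are installed; the sample rows in `PEOPLE` and the function name `names_older_than` are made up for illustration and do not come from the Spark documentation.

```python
# Hypothetical sample data, used only for this illustration.
PEOPLE = [("Alice", 34), ("Bob", 45), ("Cara", 29)]


def names_older_than(min_age):
    """Return matching names twice: via the DataFrame API and via SQL."""
    # Imported lazily so this module still loads where PySpark is absent.
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder.master("local[*]").appName("sketch").getOrCreate()
    )
    try:
        df = spark.createDataFrame(PEOPLE, schema=["name", "age"])

        # Programmatic DataFrame API.
        api_rows = df.filter(df["age"] > min_age).select("name").collect()

        # The same query expressed in SQL against a temporary view.
        df.createOrReplaceTempView("people")
        sql_rows = spark.sql(
            f"SELECT name FROM people WHERE age > {int(min_age)}"
        ).collect()

        return (
            sorted(row["name"] for row in api_rows),
            sorted(row["name"] for row in sql_rows),
        )
    finally:
        # Release the local Spark session when done.
        spark.stop()
```

Calling `names_older_than(30)` on a machine with a working local Spark setup should give the same list of names from both code paths, which is the practical meaning of not having to learn separate frameworks for each style.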
